Tech

Decoding the Role of 6v5m4xw in Digital Architecture

In the rapidly evolving landscape of digital information, alphanumeric sequences like 6v5m4xw serve as connective tissue between software applications and their underlying data structures. While such a string may appear random to the casual observer, it often represents a specific point of reference within a database, a content management system, or a communication protocol. As our global infrastructure becomes more automated, reliance on these unique signatures grows, because they let systems route information across vast networks with little risk of duplication or misdelivery.

The function of 6v5m4xw is deeply rooted in the concept of machine readability. In systems that handle very large numbers of transactions, human-descriptive titles are often inefficient and prone to ambiguity. Instead, developers and engineers use short, unique strings to tag session IDs, document versions, or specific hardware nodes. This systematic approach means that even if a system scales to billions of records, each record can still be looked up directly by its key. By examining how these identifiers operate, we gain insight into the invisible logic that maintains the stability of our connected world.

The Evolution of Alphanumeric Indexing

The transition from physical record-keeping to digital databases necessitated a new way of organizing information. In the early days of computing, simple sequential numbering was often sufficient. However, as networks grew in complexity and became decentralized, the need for more robust identifiers emerged. This led to the adoption of alphanumeric strings, which provide a significantly larger pool of unique combinations within a compact format. A sequence like the one we are examining is a product of this evolution. If its seven characters are drawn from lowercase letters and digits, there are 36^7 (roughly 78 billion) possible values, which makes accidental “collisions” between different data points far less likely than with a numeric code of the same length.

Modern indexing strategies often rely on specific algorithms to generate these strings. These algorithms may combine timestamps, server location codes, and random values so that two generators are extremely unlikely to produce the same ID. This level of precision is critical for cloud computing, where data may be distributed across multiple continents simultaneously. By using standardized formats for identification, organizations can keep their systems interoperable, allowing different software platforms to exchange information while maintaining the integrity of the original source.
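As a rough illustration of the idea above, the sketch below joins a millisecond timestamp, a node code, and a random suffix into one identifier. The function names, the `eu1` node code, and the three-part layout are assumptions made for this example; nothing here implies that 6v5m4xw was produced this way.

```python
import secrets
import time
from typing import Optional

ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyz"  # base-36 digits


def to_base36(n: int) -> str:
    """Encode a non-negative integer using digits and lowercase letters."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return "0"
    digits = []
    while n:
        n, remainder = divmod(n, 36)
        digits.append(ALPHABET[remainder])
    return "".join(reversed(digits))


def make_id(node_code: str, now_ms: Optional[int] = None, random_len: int = 6) -> str:
    """Build '<timestamp>-<node>-<random>' (a hypothetical layout)."""
    if now_ms is None:
        now_ms = time.time_ns() // 1_000_000  # current time in milliseconds
    # The random suffix separates IDs created in the same millisecond on one node.
    suffix = "".join(secrets.choice(ALPHABET) for _ in range(random_len))
    return f"{to_base36(now_ms)}-{node_code}-{suffix}"


print(make_id("eu1"))
```

The timestamp keeps IDs roughly sortable by creation time, the node code separates generators from one another, and the random suffix covers IDs created in the same millisecond on the same node.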

Enhancing Performance in Large Scale Systems

One of the primary benefits of using unique identifiers is faster lookups. When a database is queried by an exact key like 6v5m4xw, the search usually completes in a fraction of the time needed to scan descriptive text. This is because such keys are typically stored in indexes that let the system skip irrelevant data and jump almost directly to the target record. For high-traffic websites and applications, this speed is the difference between a smooth user experience and a frustrating delay.
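The difference can be seen with SQLite, which ships with Python. This is a minimal sketch: the `records` table, its columns, and the sample rows are invented for illustration. It asks the database how it would run an exact key lookup versus a substring search on a text column.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# A PRIMARY KEY on a TEXT column makes SQLite build an index on it automatically.
conn.execute("CREATE TABLE records (key TEXT PRIMARY KEY, title TEXT)")
conn.executemany(
    "INSERT INTO records VALUES (?, ?)",
    [("6v5m4xw", "Quarterly report"), ("9k2p7qd", "Release notes")],
)


def query_plan(sql: str, params: tuple = ()) -> str:
    """Return SQLite's description of how it would execute the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in rows)  # column 3 holds the plan text


by_key = query_plan("SELECT title FROM records WHERE key = ?", ("6v5m4xw",))
by_text = query_plan("SELECT key FROM records WHERE title LIKE ?", ("%report%",))

print("lookup by key :", by_key)
print("lookup by text:", by_text)
```

The exact wording of the plan varies between SQLite versions, but the key lookup is reported as an index search while the substring match is reported as a scan of the whole table.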

Furthermore, these identifiers facilitate better cache management. In a distributed network, frequently accessed information is often stored in temporary “cache” layers to reduce the load on the primary database. By using a consistent and unique key for each piece of content, the system can quickly determine if the requested data is already available in the cache. This reduces latency and minimizes the bandwidth required to serve requests. As data volumes continue to explode, the role of these efficient identifiers in maintaining system responsiveness cannot be overstated.
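A minimal sketch of that lookup pattern is shown below. The `ReadThroughCache` class and the `slow_database_read` function are hypothetical stand-ins, and a real cache would also need expiry and size limits, which are left out here.

```python
from typing import Callable, Dict


class ReadThroughCache:
    """Serve values from memory when possible; otherwise load and remember them."""

    def __init__(self, load_from_db: Callable[[str], str]) -> None:
        self._load = load_from_db
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> str:
        if key in self._store:       # the unique key makes this check unambiguous
            self.hits += 1
            return self._store[key]
        self.misses += 1
        value = self._load(key)      # fall back to the primary database
        self._store[key] = value
        return value


db_calls = []


def slow_database_read(key: str) -> str:
    """Stand-in for a real database query (hypothetical)."""
    db_calls.append(key)
    return f"content for {key}"


cache = ReadThroughCache(slow_database_read)
first = cache.get("6v5m4xw")   # miss: goes to the database
second = cache.get("6v5m4xw")  # hit: served from memory
print(cache.hits, cache.misses, len(db_calls))
```

Because the key identifies exactly one piece of content, the second request never reaches the database.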

Ensuring Data Integrity and Traceability

Data integrity refers to the accuracy and consistency of information throughout its lifecycle. In complex environments, tracking the movement and transformation of data is a major challenge. Unique strings play a vital role here by acting as a digital fingerprint that follows a record through every stage of processing. If an error occurs or a data packet is lost, engineers can use the identifier to trace the issue back to its origin. This level of traceability is essential for auditing, troubleshooting, and maintaining high standards of quality control in software development.

In addition to traceability, these identifiers help prevent the accidental overwriting of data. In collaborative environments where multiple users or processes might be accessing the same dataset, unique keys, usually paired with a version number or content hash, let the system attribute each modification to the correct version of the record. This is particularly important in version control systems and distributed ledgers, where the history of changes must be preserved reliably. By providing a stable point of reference, these alphanumeric codes support the security and reliability needed for professional data management.
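One common way to put this into practice is optimistic concurrency control: a writer must state which version of the record it read, and the update is rejected if the record has changed since. The sketch below is an assumption about how such a check might look; `RecordStore` and `VersionConflict` are hypothetical names, not part of any particular product.

```python
from typing import Dict, Tuple


class VersionConflict(Exception):
    """Raised when a writer tries to update a version that is no longer current."""


class RecordStore:
    def __init__(self) -> None:
        self._rows: Dict[str, Tuple[int, str]] = {}  # key -> (version, value)

    def create(self, key: str, value: str) -> None:
        if key in self._rows:
            raise KeyError(f"{key} already exists")
        self._rows[key] = (1, value)

    def read(self, key: str) -> Tuple[int, str]:
        return self._rows[key]

    def update(self, key: str, expected_version: int, new_value: str) -> int:
        current_version, _ = self._rows[key]
        if current_version != expected_version:
            # Someone else changed the record after this writer read it.
            raise VersionConflict(
                f"{key}: expected v{expected_version}, found v{current_version}"
            )
        self._rows[key] = (current_version + 1, new_value)
        return current_version + 1


store = RecordStore()
store.create("6v5m4xw", "draft")
version, _ = store.read("6v5m4xw")          # both writers read version 1
store.update("6v5m4xw", version, "edit A")  # first writer succeeds
try:
    store.update("6v5m4xw", version, "edit B")  # second writer is rejected
    conflict = False
except VersionConflict:
    conflict = True
print(store.read("6v5m4xw"), conflict)
```

The second writer has to re-read the record and reapply its change, so the first edit is never silently lost.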

Security Implications of Digital Identifiers

Security is a paramount concern whenever data is transmitted or stored. While a sequence like 6v5m4xw is not a password, strings of this kind often act as components of a secure access framework. For example, session tokens and API keys usually take the form of unique alphanumeric strings, although in practice they are much longer than seven characters. These tokens are used to verify that a request is coming from an authorized source without exposing sensitive user credentials. The length and randomness of these strings are what make them resistant to “brute force” attacks, where an intruder attempts to guess the identifier through trial and error.
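For illustration, Python's standard library can generate and check such a token. This is a minimal sketch: the function names are hypothetical, the 32-byte length is an assumed default, and a real service would also handle storage, expiry, and revocation.

```python
import hmac
import secrets


def issue_token(num_bytes: int = 32) -> str:
    """Return a URL-safe random token built from num_bytes of randomness."""
    return secrets.token_urlsafe(num_bytes)


def token_matches(presented: str, stored: str) -> bool:
    """Compare tokens in constant time to avoid leaking information via timing."""
    return hmac.compare_digest(presented.encode("utf-8"), stored.encode("utf-8"))


stored_token = issue_token()
print(len(stored_token))                           # length of the generated token
print(token_matches(stored_token, stored_token))   # a correct token is accepted
print(token_matches("6v5m4xw", stored_token))      # a guessed short string is rejected
```

The `secrets` module draws from the operating system's cryptographic random source, which is the property that makes guessing impractical; a general-purpose random number generator would not offer the same guarantee.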

Moreover, many modern systems employ a technique called “obfuscation” to protect their internal data structures. By using non-descriptive identifiers, developers can prevent attackers from gaining insights into the nature of the information being stored. For instance, an identifier that reveals a user’s name or a product category could be exploited by a malicious actor to map out a system’s vulnerabilities. By contrast, a neutral and abstract string provides no such information, adding an extra layer of defense to the overall security architecture.

Automation and the Role of Machine Intelligence

As we move toward a world driven by artificial intelligence and automated decision-making, the importance of structured data identifiers continues to grow. Machine learning models require massive amounts of data to train effectively, and this data must be organized in a way that the algorithms can easily digest. Unique identifiers allow these models to link disparate datasets, creating a more comprehensive view of the information. For example, an AI might use a specific string to correlate a user’s browsing behavior with their purchase history across different platforms.
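The linking step described above amounts to a join on a shared identifier. In the sketch below, the two datasets, their field names, and the `link_by_id` helper are all made up for the example.

```python
from collections import defaultdict
from typing import Dict, List

# Two hypothetical datasets that share only an opaque identifier.
browsing = [
    {"id": "6v5m4xw", "page": "/shoes"},
    {"id": "6v5m4xw", "page": "/boots"},
    {"id": "9k2p7qd", "page": "/hats"},
]
purchases = [
    {"id": "6v5m4xw", "item": "boots"},
]


def link_by_id(browsing: List[dict], purchases: List[dict]) -> Dict[str, dict]:
    """Group records from both sources under the identifier they share."""
    profiles = defaultdict(lambda: {"pages": [], "orders": []})
    for event in browsing:
        profiles[event["id"]]["pages"].append(event["page"])
    for order in purchases:
        profiles[order["id"]]["orders"].append(order["item"])
    return dict(profiles)


linked = link_by_id(browsing, purchases)
print(linked["6v5m4xw"])
```

The join only works if both sources use exactly the same identifier for the same user, which is why consistent ID formats matter so much for this kind of analysis.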

Automation also extends to the generation and management of the identifiers themselves. Autonomous agents can now monitor system health, identify bottlenecks, and reallocate resources without human intervention. These agents use unique keys to identify which processes are running and where they are located in the network. This level of automation is only possible because of the underlying structure provided by consistent identification standards. As systems become more self-aware and self-correcting, the reliance on these digital anchors will only become more profound.

Interoperability Across Global Platforms

In a fragmented digital ecosystem, getting different systems to communicate is a major hurdle. Interoperability depends on the use of common standards that define how data should be identified and exchanged. When different organizations agree on a specific format for their identifiers, they can share information with much greater ease. This is the foundation of the modern internet, where millions of independent servers work together to provide a unified experience for the user. A short, opaque key like 6v5m4xw fits this pattern: its usefulness comes from the format the participating systems have agreed on, not from the characters themselves.

Standardization also encourages innovation by allowing smaller developers to build tools that work with existing platforms. If the rules for data identification are open and well-documented, anyone can create an application that integrates with a major service. This creates a more competitive and vibrant marketplace, where the best ideas can succeed regardless of the size of the company behind them. However, achieving this level of cooperation requires a commitment to long-term planning and a willingness to prioritize the collective efficiency of the network over proprietary interests.

The Future of Alphanumeric Identifiers

Looking toward the future, the methods we use to identify digital information are likely to become even more sophisticated. We are seeing a move toward “decentralized identifiers” (DIDs), which allow individuals and devices to manage their own identities without relying on a central authority. This shift toward decentralization has the potential to enhance privacy and give users more control over their personal data. In such a system, identifiers would be cryptographically linked to the user, providing a level of security and autonomy that is not possible with traditional centralized databases.

Additionally, the rise of the Internet of Things (IoT) means that billions of new devices will soon be connected to the internet. Each of these devices—from smart thermostats to industrial sensors—will require its own unique identity to function correctly within the network. This will create a massive demand for new, even more complex alphanumeric sequences. As we navigate this transition, the principles of uniqueness, speed, and security will remain the guiding stars for the engineers and developers who build the digital infrastructure of tomorrow.

Comparison of Identification Methods

| Method Type | Primary Use Case | Key Advantage | Implementation Example |
| --- | --- | --- | --- |
| Sequential | Small, local databases | Simplicity and readability | Order #101, #102 |
| Alphanumeric | Web systems and APIs | Compact, with a larger address space than digits alone | 6v5m4xw |
| UUID | Distributed systems | Uniqueness without central coordination (collisions are extremely unlikely) | 550e8400-e29b-41d4 |
| Hashed | Security and integrity | One-way transformation | SHA-256 signatures |
| Biometric | User authentication | Inherent to the user and hard to change | Fingerprint/Iris scan |

Frequently Asked Questions

Why do some identifiers contain both letters and numbers?

Using both letters and numbers (alphanumeric) increases the number of unique combinations possible for a given string length. This allows for a much larger “address space” compared to using numbers alone.
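The arithmetic is easy to check. The sketch below assumes a seven-character identifier and compares a digits-only alphabet with one that also includes the 26 lowercase letters; the `address_space` helper is a name chosen for this example.

```python
import math


def address_space(alphabet_size: int, length: int) -> int:
    """Number of distinct strings of a given length over a given alphabet."""
    return alphabet_size ** length


digits_only = address_space(10, 7)     # 0-9
alphanumeric = address_space(36, 7)    # 0-9 plus a-z
bits = math.log2(alphanumeric)         # equivalent size in bits

print(f"{digits_only:,}")              # 10,000,000
print(f"{alphanumeric:,}")             # 78,364,164,096
print(round(bits, 1))
```

At the same length, the alphanumeric version offers several thousand times as many possible values as the numeric one.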

Can an identifier like 6v5m4xw be used to track my personal data?

On its own, a random string is typically anonymous. However, in a backend system, it can be linked to a specific user profile. Its privacy impact depends entirely on how the specific platform manages its data associations.

What happens if two systems generate the same identifier?

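This is known as a “collision.” In professional environments, developers choose identifier lengths and generation algorithms that make the probability of a collision negligible for the number of IDs they expect to issue. If one does occur, a well-designed system detects it, typically through a uniqueness check, and resolves the conflict by generating a new identifier.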
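Two pieces of that answer can be sketched in code: estimating how likely a collision is, and retrying when one is detected. The estimate uses the standard birthday approximation and assumes IDs are drawn uniformly at random; the function names and the in-memory `set` standing in for a database uniqueness check are assumptions for this example.

```python
import math
import secrets
from typing import Set

ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyz"


def collision_probability(num_ids: int, space_size: int) -> float:
    """Birthday approximation: chance that at least two of num_ids random IDs match."""
    exponent = num_ids * (num_ids - 1) / (2 * space_size)
    return -math.expm1(-exponent)  # 1 - e^(-exponent), accurate for tiny values


def new_unique_id(existing: Set[str], length: int = 7, max_attempts: int = 10) -> str:
    """Generate a random ID, retrying if it is already taken."""
    for _ in range(max_attempts):
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if candidate not in existing:   # the "detect" step
            existing.add(candidate)
            return candidate
    raise RuntimeError("could not find a free identifier")


print(collision_probability(1_000, 36**7))       # small for a thousand IDs
print(collision_probability(1_000_000, 36**7))   # close to 1 for a million IDs
print(collision_probability(1_000_000, 2**122))  # random-UUID-sized space
```

The numbers show why length matters: a seven-character base-36 space is comfortable for thousands of random IDs, but at a million IDs a collision becomes almost certain, whereas a space the size of a random UUID's 122 random bits stays negligible at that scale.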

Conclusion

The exploration of numeric and alphanumeric identifiers like 6v5m4xw highlights the meticulous design and engineering that underpin our modern digital existence. These strings are far more than just “filler” or random noise; they are the fundamental building blocks of data organization, security, and system performance. As our world becomes increasingly reliant on complex networks and automated processes, the role of these unique signatures will only expand. They provide the necessary structure to manage trillions of data points, ensuring that the right information reaches the right place at the right time.

From the developer’s perspective, choosing the right identification strategy is a critical decision that impacts the scalability and security of an entire application. From the user’s perspective, these identifiers work silently in the background, enabling the seamless digital experiences we have come to expect. Whether we are discussing the evolution of indexing, the security of API tokens, or the future of decentralized identity, the central theme remains the same: the need for precise, reliable, and efficient ways to label our digital world. By appreciating the logic behind these codes, we gain a deeper understanding of the sophisticated systems that drive the twenty-first century.

How to Fix Connectivity Issues HSSGamepad Fast

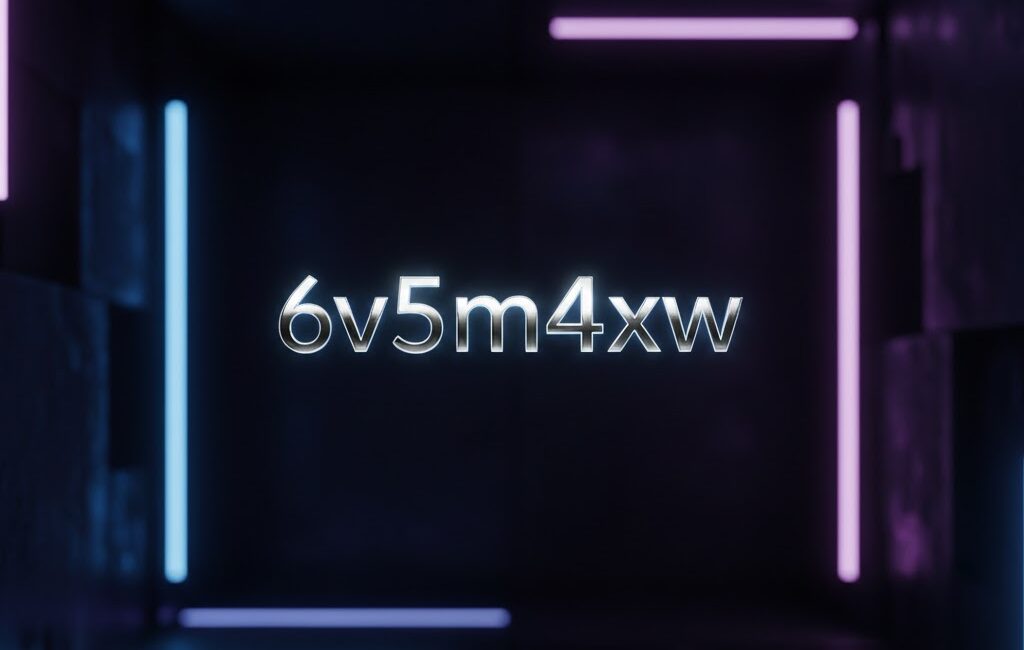

Maintaining a stable link between your controller and your gaming setup is essential for a seamless experience. When you encounter connectivity issues with the HSSGamepad, they can disrupt your momentum and cause frustration during critical gameplay moments. This guide explains how to identify and resolve these problems without unnecessary complexity. By following a structured approach to hardware and software checks, you can make your device far more reliable every time you power it up.

Troubleshooting these problems requires a bit of patience and a systematic look at both physical components and digital settings. Whether you are playing on a console or a high-performance PC, the fundamentals of signal stability remain the same. We will explore the common culprits behind dropped signals and unresponsive buttons to help you get back into the action as quickly as possible.

Initial Hardware Assessment

Before diving into deep software configurations, it is vital to examine the physical state of your equipment. Often, the root cause of a failing connection is as simple as a loose cable or a depleted battery. If you are using a wired setup, inspect the USB ports on both the controller and the console or PC for any signs of debris or physical damage. Dust buildup can frequently prevent a clean data transfer, leading to intermittent signals. For those utilizing a wireless connection, ensure that the internal batteries are fully charged or that fresh replacements are inserted. Low power levels often result in a weak signal that drops frequently during intense sessions.

Additionally, consider the quality of the cables being used. Some generic charging cables carry power only and lack the data lines that a gaming peripheral needs. Swapping your current cable for a high-quality, shielded USB data cable can eliminate lag and disconnects caused by the cable itself. If the device has been dropped or exposed to moisture, internal components might be compromised, which would require professional repair. Starting with these basic physical checks saves time and prevents you from troubleshooting software issues that may not actually exist. Always check that connectors sit firmly in their ports and show no visible corrosion, so that data flows steadily between the devices.

Wireless Interference Management

In a modern home filled with electronic devices, signal interference is a common culprit for pairing failures. Many wireless peripherals operate in the 2.4 GHz band, which they share with microwave ovens, some cordless phones, and Wi-Fi routers. To improve the stability of your connection, try to minimize the number of active Bluetooth devices in the immediate vicinity. If your gaming station is surrounded by smart home hubs or multiple smartphones, their signals can crowd the band and cause the controller to lose its sync with the receiver. Switching your router to a different channel can also relieve some of the congestion on the 2.4 GHz band.

Physical barriers also play a significant role in signal strength. Metal desks, thick walls, or even glass cabinets can degrade the wireless wave as it travels from the handheld device to the console. Position your receiver in an open area with a direct line of sight to your seating position. If you are using a USB dongle, plugging it into a front facing port rather than the back of a computer tower can provide a much clearer path for the signal. Reducing the distance between the controller and the host device is often the quickest way to stabilize a flickering connection and ensure that every button press is registered without any noticeable delay.

Driver and Firmware Updates

Software serves as the bridge between your hardware and the operating system. If this bridge is outdated, you will likely experience significant bugs or a total lack of recognition by your computer. Manufacturers frequently release firmware updates to patch security holes and improve compatibility with new gaming titles. Visit the official support page for your device to check if there is a newer version of the firmware available. Installing these updates can recalibrate the internal sensors and communication protocols, often fixing persistent bugs that caused previous failures. This process usually involves connecting the device via a cable to a computer and running a small utility.

On the PC side, the operating system relies on specific drivers to translate button presses into in game actions. Open your device manager and look for any warning icons next to the game controller entry. If the system lists the device as unknown, you may need to manually uninstall the current driver and let the system rediscover the hardware. In some cases, using a generic HID driver provided by the OS is sufficient, but specialized software from the manufacturer usually offers better calibration tools. Keeping your software environment current ensures that your hardware can communicate effectively with the latest game engines and prevents crashes during launch.

Operating System Configuration

Sometimes the settings within your Windows or console environment are configured in a way that limits peripheral performance. Power saving modes are a frequent offender in this category. To conserve energy, many operating systems turn off USB ports or Bluetooth radios when they detect a period of inactivity. This can lead to the controller shutting down unexpectedly during a cutscene or a pause in the action. On Windows, switching your power plan to High performance or disabling the USB selective suspend setting prevents these idle-time disconnects, because the port stays powered while the system is running.

Furthermore, ensure that your OS is fully updated. Recent patches for Windows or console system software often include fixes for Bluetooth stacks and peripheral handling. If you are using a PC, check the privacy settings to ensure that apps have permission to access your radio and game controllers. In some instances, background applications or third party overlay software can conflict with the controller input. Performing a clean boot can help identify if another program is intercepting the signal or causing the driver to crash during use. Eliminating these background conflicts is a key step in creating a stable environment for any gaming peripheral.

Sync and Pairing Protocols

The process of establishing a handshake between a controller and its host requires both devices to be in the correct mode simultaneously. If the pairing sequence is interrupted or timed out, the devices may remember a partial or corrupted profile. To fix this, you should completely remove the device from your Bluetooth list or saved peripherals. Once the old profile is deleted, restart both the controller and the host system. This clear slate allows for a fresh synchronization process that often bypasses previous handshake errors. It is a simple reset that forces the hardware to renegotiate the connection terms.

When initiating the sync, refer to the LED indicators on the device. Most gamepads have specific flashing patterns that signify they are in discovery mode. If the lights do not behave as expected, the internal pairing button might be stuck or unresponsive. Some devices also feature a small reset pinhole on the underside. Using a small tool to press this button can restore the hardware to its factory settings, wiping out any glitched configurations. Once reset, the device should be much easier to pair with your preferred gaming platform. Ensure that no other devices are trying to pair at the same moment to avoid confusion during the handshake.

Internal Settings and Calibration

Once a connection is established, you might still face issues with input lag or unresponsive buttons. This is often a matter of calibration rather than a total loss of signal. Most modern gaming platforms include a built-in calibration wizard that lets you test the dead zones and sensitivity of your thumbsticks and triggers. If the software perceives the stick as moving even when you are not touching it (often called stick drift), widening the dead zone can stabilize character movement. This ensures that the data being sent over the connection is accurate and useful. Proper calibration can make an old controller feel far more responsive.
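To make the dead zone idea concrete, here is a minimal sketch of a radial dead zone applied to thumbstick readings. It assumes the stick reports `x` and `y` in the range -1.0 to 1.0; the `apply_dead_zone` name and the 0.15 threshold are illustrative choices, not values taken from any particular controller or platform.

```python
import math
from typing import Tuple


def apply_dead_zone(x: float, y: float, dead_zone: float = 0.15) -> Tuple[float, float]:
    """Ignore small stick deflections and rescale the rest to the full range."""
    if not 0.0 <= dead_zone < 1.0:
        raise ValueError("dead_zone must be in [0, 1)")
    magnitude = math.hypot(x, y)
    if magnitude <= dead_zone:
        return 0.0, 0.0  # treat drift near the center as no input
    # Rescale so output starts at 0 at the edge of the dead zone and reaches 1 at full tilt.
    scaled = min(1.0, (magnitude - dead_zone) / (1.0 - dead_zone))
    return x / magnitude * scaled, y / magnitude * scaled


print(apply_dead_zone(0.05, -0.03))  # drift inside the dead zone is ignored
print(apply_dead_zone(1.0, 0.0))     # full tilt still maps to full output
print(apply_dead_zone(0.5, 0.0))     # partial tilt is rescaled
```

Rescaling after the cutoff avoids a sudden jump in output as the stick leaves the dead zone, which is why it is often preferred over simply zeroing small values.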

Inside individual games, there are often specific settings for controller types. Some titles might default to a different input method, causing your gamepad to seem unresponsive. Ensure the game is set to recognize external controllers as the primary input. If you are using a wrapper or an emulator to make your device compatible with older titles, double check the mapping settings. A single misconfigured button in the mapping software can make it seem like the device has disconnected when, in reality, it is simply sending commands that the game does not recognize. Fine tuning these settings provides a tailored experience for every genre you play.

Advanced Troubleshooting and Repair

If all the above steps fail to provide a stable link, there may be a deeper issue within the hardware circuitry. Over time, the internal antenna or the USB port solder joints can weaken due to repetitive use. If you are comfortable with electronics, you can check for loose connections inside the casing, but for most users, this is the point where manufacturer support becomes necessary. Checking the warranty status of your device is a smart move before attempting any DIY repairs that might void your coverage. Internal component failure is rare but can occur after years of heavy use or accidental damage.

Sometimes, the issue is not the gamepad at all, but the host device’s internal Bluetooth card or USB controller. Testing the gamepad on a different computer or console can help isolate where the fault lies. If the device works perfectly on a secondary system, you know the problem is localized to your primary gaming rig. This might mean you need to invest in a dedicated Bluetooth adapter or a new USB hub to handle the data load. Identifying the specific point of failure prevents you from replacing a perfectly functional controller when the real issue is a faulty port on your PC or console system.

Technical Specification Table

| Connection Component | Optimal State | Troubleshooting Action |
| --- | --- | --- |
| Battery Health | Full Charge | Replace or recharge cells |
| Signal Distance | Within 2 meters | Remove physical obstacles |
| USB Port Type | USB 3.0 or higher | Switch to a direct port |
| Software Version | Latest Firmware | Run manufacturer update tool |
| Interference Level | Low 2.4 GHz noise | Turn off nearby Bluetooth |
| Driver Status | WHQL Certified | Reinstall via Device Manager |

FAQs

Why does the device keep disconnecting during gameplay?

This is often caused by power saving settings in your operating system. Make sure that the USB selective suspend feature is turned off so the port stays active.

Can a low battery affect the signal quality?

Yes, as the battery dies, the wireless transmitter has less power to send signals, which leads to dropped inputs and intermittent connection issues.

Is it better to use a wired or wireless connection?

A wired connection is generally more stable and offers lower latency. If you are experiencing interference, switching to a high quality cable is the best solution.

How do I reset the internal memory of the controller?

Many devices have a small pinhole on the back. Pressing the button inside it with a paperclip for about ten seconds will usually reset the pairing memory to factory defaults.

Will other Bluetooth devices interfere with my gamepad?

Yes, devices like wireless headsets and smartphones can occupy the same frequency. Keeping these away from your receiver can help stabilize the link.

Conclusion

Resolving connectivity issues with the HSSGamepad is a process of elimination that starts with the simplest physical factors and moves toward more complex software adjustments. By making sure your hardware is clean, your batteries are charged, and your drivers are up to date, you can fix the majority of common problems. Remember to keep the path between your controller and receiver clear of obstructions and other wireless signals to maintain the best possible performance.

If the problem persists when the controller is tested on several different host devices, it may be time to look into a hardware replacement or professional repair services. However, most users find that a simple reset or a change of USB port is enough to clear up lag or signal drops. Staying proactive with firmware updates and maintaining a clean gaming environment will help your equipment last for years. With a bit of patience and systematic testing, you can return to your gaming sessions with a reliable and responsive setup that won’t let you down during intense matches.

Tomleonessa679: Deep Dive into Digital Identity Management

Introduction

In the current era of hyper-connectivity, digital identifiers like tomleonessa679 serve as more than just usernames; they are the cornerstones of online identity. As the boundary between the physical and digital worlds continues to blur, the way individuals and entities present themselves online has become a critical factor in professional and personal success. Navigating this space requires a sophisticated understanding of how data, social interaction, and search visibility intersect. Every digital footprint tells a story, and for those operating in specialized niches, maintaining a consistent and professional image is paramount.

The evolution of the internet has moved us toward a state where our virtual presence often precedes our physical arrival. Whether it is through a professional network, a social media handle, or a specific project identifier, these tags allow for streamlined discovery in a sea of information. This article provides a comprehensive exploration of the mechanics behind digital identity management. We will examine how strategic positioning, technical optimization, and authentic engagement contribute to a robust online profile. By understanding these dynamics, one can better manage the various facets of their digital life, ensuring that identifiers like tomleonessa679 remain synonymous with quality and reliability.

The Architecture of Online Identifiers

The structure of a digital identifier often reflects a blend of personal preference and functional necessity. In many cases, these alphanumeric strings are designed to be unique, memorable, and easily searchable across multiple platforms. The goal is to create a unified presence that allows users to transition from one service to another without losing the context of who they are interacting with. This cross-platform consistency is vital for building trust. When an audience sees a familiar handle, it reinforces the sense of a cohesive brand or personality, rather than a fragmented series of accounts.

Technically, these identifiers serve as keys within vast databases, linking content, preferences, and history to a single point of origin. This allows for a personalized experience where the platform can serve relevant information based on past interactions. For the creator, this means that every piece of content shared under a specific name contributes to a growing body of work. Over time, this cumulative effect builds authority. It is not just about the individual posts, but about the aggregate value of the identity as a whole, which becomes an asset in its own right in the digital economy.

Strategies for Enhancing Search Visibility

Visibility in the digital space is largely dictated by how well an identity aligns with the requirements of modern search algorithms. These algorithms are designed to reward content that is relevant, authoritative, and user-friendly. To enhance visibility, it is essential to ensure that the identifier is associated with high-quality, original material. This involves more than just keyword placement; it requires a holistic approach to content creation where the primary focus is on solving problems or providing unique insights for the audience.

Another key aspect of visibility is the concept of “connectedness.” Search engines look for signals that an identity is recognized by other reputable sources. This is achieved through natural mentions, collaborations, and the creation of shareable assets. When other platforms link to or discuss a specific digital entity, it serves as a vote of confidence in the eyes of the algorithm. This organic growth is far more valuable than artificial attempts to manipulate rankings. By staying focused on genuine engagement and the consistent delivery of value, a digital profile can achieve a high level of prominence without relying on aggressive marketing tactics.

The Impact of Social Proof on Professional Reputation

In the online world, reputation is often measured through social proof. This includes metrics like engagement rates, testimonials, and the general sentiment expressed by the community. A positive reputation acts as a magnet, attracting new opportunities and fostering a loyal following. Conversely, a lack of transparency or a history of inconsistent behavior can quickly erode trust. Maintaining a professional reputation requires a commitment to honesty and a willingness to engage openly with the audience. It is about being a reliable source of information and a respectful participant in digital conversations.

Social proof is also self-reinforcing. As more people interact positively with a digital entity, others are more likely to view it as credible. This creates a virtuous cycle of growth and influence. For those managing a professional identity, this means that every interaction is an opportunity to strengthen their standing. Whether it is answering a question, sharing a resource, or acknowledging feedback, these small actions contribute to a larger narrative of expertise and accessibility. In a crowded marketplace, the most trusted voices are usually the ones that have consistently demonstrated their value over time.

Navigating the Challenges of Privacy and Security

With increased visibility comes an increased need for robust security measures. Protecting a digital identity is not just about safeguarding personal data; it is about protecting one’s professional integrity. Cyber threats are becoming more sophisticated, ranging from simple phishing attempts to complex identity theft schemes. Implementing multi-factor authentication, using encrypted communication channels, and regularly auditing account permissions are essential practices for anyone with a significant online presence. Security should be viewed as a foundational element of digital strategy rather than an afterthought.

Privacy is equally important. In an age where data is often traded as a commodity, being mindful of what is shared and with whom is vital. This involves understanding the privacy settings of various platforms and being selective about the personal details revealed in public forums. A well-managed digital identity strikes a balance between being accessible and being protected. By maintaining clear boundaries and using secure tools, individuals can enjoy the benefits of a global platform without exposing themselves to unnecessary risks. Trust is hard to build but easy to lose, and a single security breach can have a devastating impact on a hard-earned reputation.

Adapting to Shifts in Digital Communication

The way we communicate online is constantly evolving, driven by changes in technology and shifts in user behavior. We have moved from simple text-based interactions to a multimedia-rich environment where video, audio, and interactive elements are the norm. Adapting to these changes requires a flexible mindset and a willingness to learn new tools and formats. For instance, an identity that was built on long-form writing might need to incorporate short-form updates or visual storytelling to remain relevant to a changing audience.

This adaptation is not about chasing every trend, but about finding the best ways to deliver a message in the current landscape. It is about understanding where the audience is spending their time and how they prefer to consume information. By being responsive to these shifts, a digital entity can maintain its relevance and continue to grow. This might involve experimenting with new platforms, adopting artificial intelligence for content optimization, or exploring immersive technologies. The key is to remain true to the core values of the identity while being open to the diverse ways those values can be expressed.

Data Analysis as a Tool for Improvement

Data is a powerful ally in the quest for digital growth. By analyzing how users interact with content, one can gain valuable insights into what works and what doesn’t. This includes tracking which topics generate the most interest, which times of day are best for posting, and which formats lead to the highest levels of engagement. This quantitative approach allows for informed decision-making, moving away from guesswork toward a strategy backed by evidence. It is a process of constant refinement where small adjustments can lead to significant improvements over time.
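As a rough illustration of this kind of analysis, the Python sketch below averages an engagement rate by topic and by posting hour. The record fields (`topic`, `hour`, `views`, `interactions`), the sample numbers, and the helpers `average_rate_by` and `best_group` are all hypothetical; real platforms export analytics in their own formats.

```python
from collections import defaultdict

# Hypothetical sample records; the field names and numbers are made up.
POSTS = [
    {"topic": "security", "hour": 9, "views": 1000, "interactions": 80},
    {"topic": "security", "hour": 18, "views": 500, "interactions": 20},
    {"topic": "privacy", "hour": 9, "views": 800, "interactions": 40},
    {"topic": "privacy", "hour": 18, "views": 400, "interactions": 12},
]


def average_rate_by(posts, key):
    """Average engagement rate (interactions / views) grouped by `key`."""
    rates = defaultdict(list)
    for post in posts:
        if post["views"] > 0:  # skip posts with no views to avoid dividing by zero
            rates[post[key]].append(post["interactions"] / post["views"])
    return {group: sum(values) / len(values) for group, values in rates.items()}


def best_group(posts, key):
    """Return the group with the highest average engagement rate."""
    averages = average_rate_by(posts, key)
    return max(averages, key=averages.get)


if __name__ == "__main__":
    print(average_rate_by(POSTS, "topic"))
    print(best_group(POSTS, "hour"))
```

With only four records the averages are purely a demonstration; on real data, the number of posts behind each group matters as much as the average itself.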

However, data should be used to supplement, not replace, human intuition and creativity. While the numbers can tell you “what” is happening, they often struggle to explain “why.” Combining data analysis with qualitative feedback—such as direct comments and community discussions—provides a more complete picture of the audience’s needs and perceptions. This balanced approach ensures that the digital strategy remains both efficient and empathetic. By using data to identify opportunities and human insight to execute them, a digital profile can achieve a level of sophistication that sets it apart from the competition.

Long-Term Sustainability in the Virtual Space

Sustainability in the digital realm is about building a presence that can withstand the test of time. This requires a focus on quality over quantity and a commitment to long-term goals rather than short-term gains. A sustainable digital identity is built on a foundation of evergreen content—information that remains valuable and relevant long after it is first published. This creates a reliable source of traffic and engagement that doesn’t rely on the whims of trending topics or viral moments.

Furthermore, sustainability involves managing one’s energy and resources effectively. The digital world is “always on,” which can lead to burnout for those who feel the need to be constantly active. Developing a sustainable pace, automating repetitive tasks, and focusing on high-impact activities are all part of a healthy digital lifestyle. By prioritizing longevity and well-being, a creator can ensure that their online presence continues to flourish for years to come. Ultimately, the goal is to create a digital legacy that reflects a career of hard work, continuous learning, and genuine contribution to the global conversation.

Summary of Digital Management Principles

| Principle | Description | Objective |
| --- | --- | --- |
| Consistency | Using the same handles and themes across platforms. | Build brand recognition and trust. |
| Technical Health | Ensuring sites and profiles are secure and fast. | Improve user experience and search ranking. |
| Value-First Content | Creating material that solves problems for users. | Establish authority and earn organic traffic. |
| Security Hygiene | Implementing strong passwords and 2FA. | Protect reputation and personal data. |
| Strategic Engagement | Interacting authentically with the community. | Foster loyalty and improve social proof. |

FAQs

What is the importance of a unique identifier like tomleonessa679?

A unique identifier helps distinguish a person or brand in a crowded digital marketplace. It ensures that content and professional achievements are attributed to the right entity, which makes them easier for search engines and users to discover.

How can someone maintain a professional tone without being boring?

The key is to focus on providing genuine value and sharing unique perspectives. Using clear, concise language and addressing the specific needs of the audience allows for a professional yet engaging style that resonates with readers.

Are there specific tools recommended for managing digital security?

While specific brands change, the focus should be on using password managers, enabling multi-factor authentication on all platforms, and utilizing VPNs when working on public networks. Staying updated on the latest security trends is also a vital “tool” for protection.
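For readers curious what a password manager does when it generates a credential, here is a minimal Python sketch built on the standard `secrets` module. The function name `generate_password` and the default length of 20 are illustrative assumptions, not a recommendation from any specific product.

```python
import secrets
import string


def generate_password(length: int = 20) -> str:
    """Build a random password from letters, digits, and punctuation."""
    if length < 4:
        raise ValueError("length must be at least 4")
    alphabet = string.ascii_letters + string.digits + string.punctuation
    while True:
        # secrets.choice draws from a cryptographically secure random source.
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until the candidate mixes lowercase, uppercase, digit, and symbol.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


if __name__ == "__main__":
    print(generate_password())
```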

How often should a digital profile be updated?

Consistency is more important than frequency. It is better to have a regular, manageable schedule (such as once a week) than to post multiple times a day for a week and then disappear for a month. A steady flow of quality content keeps the audience engaged and signals to search engines that the profile is active.

Conclusion

The journey of managing a digital identity like tomleonessa679 is an ongoing process of growth, adaptation, and refinement. As we have seen, success in the online world is not the result of a single action but the culmination of many deliberate choices. From the technical details of search engine optimization to the human elements of community engagement and reputation management, every factor plays a role in shaping how an entity is perceived. By staying focused on quality and integrity, one can build a presence that is not only visible but also respected and influential.

As technology continues to advance, the tools and platforms we use will change, but the fundamental principles of communication and trust will remain the same. The digital landscape offers a world of opportunity for those who are willing to approach it with a strategic mindset and a commitment to excellence. Whether you are at the beginning of your digital journey or looking to enhance an existing presence, the path forward is defined by a dedication to providing value and protecting your professional standing. By embracing these challenges, you can ensure that your digital identity remains a powerful asset in an increasingly interconnected world.


The Role of Audio Video Systems in Modern Work and Living Spaces

The way we work and live has changed significantly over the past decade. Open-plan offices have given way to hybrid setups. Living rooms have become home theaters, home offices, and everything in between. At the center of this shift sits one critical element: audio video technology.

When AV systems work well, you barely notice them. When they don’t, everything grinds to a halt—a dropped call mid-presentation, a lagging screen during movie night, a conference room where nobody can hear the person speaking. Getting it right matters more than most people realize.

Enhancing Connectivity for Remote Collaboration

Clear audio and sharp video aren’t luxuries in a hybrid work environment—they’re prerequisites. Muffled sound and pixelated screens erode trust, slow down decision-making, and make remote participants feel like afterthoughts.

High-quality AV systems solve this by creating consistent, reliable communication across physical and virtual spaces. Whether it’s a boardroom equipped with ceiling microphones and 4K displays or a home office with professional-grade speakers, the right setup ensures everyone in the room—and on the screen—can engage equally.

Sound Effects Arizona specializes in designing these kinds of integrated environments, tailoring AV solutions to the specific demands of each space and the people using it.

Smart Living: AV in the Home

Home AV has evolved far beyond a mounted TV and a soundbar. Today’s integrated systems connect lighting, streaming, multi-room audio, and smart displays into a single, easy-to-manage experience.

For families, this means less friction—one app to control the living room, the kids’ rooms, and the backyard speakers. For professionals working from home, it means seamless transitions between a focused work environment in the morning and a relaxing entertainment setup in the evening.

Sound Effects Arizona brings this kind of thoughtful integration to residential spaces, designing systems that feel intuitive from day one. The goal isn’t complexity—it’s clarity. Every component should serve the people using it.

AV in High-Stakes Financial Environments

In financial settings, precision is non-negotiable. Trading floors, advisory suites, and executive conference rooms all rely on real-time data and crystal-clear communication, and they have zero tolerance for technical failure.

AV infrastructure in these environments needs to be more than functional—it needs to be intelligent. Multi-display video walls, low-latency audio, and seamless integration with existing data systems are all part of the equation. A single point of failure can have consequences that ripple far beyond a missed meeting.

Professional AV providers understand this. They design with redundancy, security, and performance in mind, ensuring that the technology supports the work rather than interrupting it.

The Future: AI-Driven AV Automation

AI is beginning to reshape how AV systems operate. Rooms that automatically adjust audio levels based on occupancy, cameras that track speakers without manual input, and systems that learn usage patterns to optimize performance—these are no longer concepts. They’re available now, and adoption is accelerating.

For businesses and homeowners alike, AI-driven automation means less time managing technology and more time using it. Sound Effects Arizona stays ahead of these developments, integrating forward-thinking solutions that won’t feel outdated in two years.

Investing in the Right AV Foundation

Professional AV integration isn’t just a technical decision—it’s a long-term investment in how a space functions. Cutting corners on installation or equipment often leads to costly fixes down the line, not to mention the daily frustration of systems that underperform.

Working with experienced integrators from the start ensures that every component is selected, installed, and calibrated for the specific environment. The result is a space that works the way it should, every time.